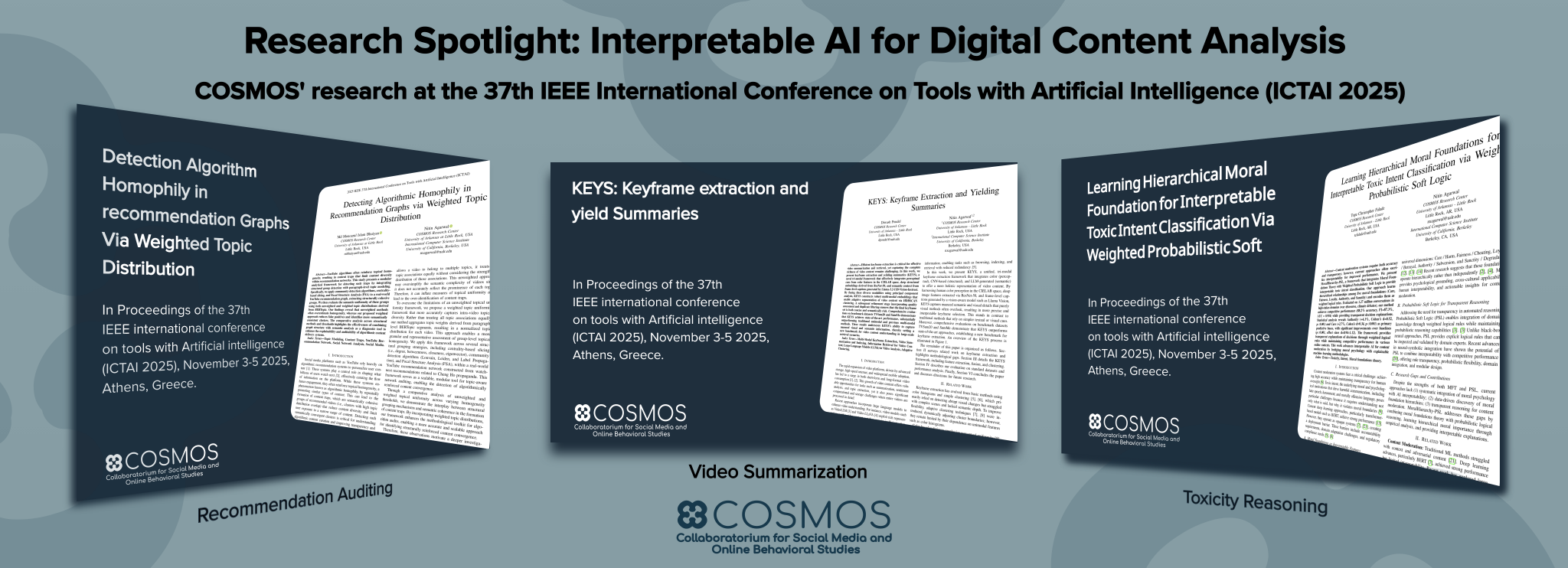

In this month’s research spotlight, COSMOS at UA Little Rock highlights its leadership in socio-computational research through three studies presented at the 37th IEEE International Conference on Tools with Artificial Intelligence (ICTAI 2025), held November 3–5, 2025, in Athens, Greece. These works advance interpretable AI for digital platforms by examining how online systems shape user experience and information exposure. The first shows how recommendation systems can confine users to narrow content pathways, the second presents a tri-modal method for extracting representative keyframes from large-scale video data, and the third introduces a transparent framework for identifying toxic intent in online interactions. Together, they offer new ways to understand how digital content is curated, consumed, and analyzed.

The first study, “Detecting Algorithmic Homophily in Recommendation Graphs via Weighted Topic Distribution,” investigates how YouTube recommendation systems reinforce topical similarity, creating “content traps.” By combining graph-based analysis with weighted topic modeling, it identifies more precisely which networks of recommended videos act as traps. Considering content alongside network structure reduces false positives compared with earlier methods, which makes recommendation systems easier to audit.

The second study, “KEYS: Keyframe Extraction and Yielding Summaries,” tackles a central challenge in large-scale video analysis: selecting a small but representative set of frames for indexing, retrieval, and summarization. Using a tri-modal framework that integrates visual, semantic, and contextual signals, the method improves the identification of meaningful keyframes and enables more efficient organization of large multimedia collections.

The third study, “Learning Hierarchical Moral Foundations for Interpretable Toxic Intent Classification via Weighted Probabilistic Soft Logic,” focuses on explainable content moderation. It combines Moral Foundations Theory with probabilistic logic to classify toxic intent using transparent rules, demonstrating strong performance across 1.27 million high-stakes online conversations while revealing how moral dimensions such as authority and care contribute to harmful discourse.

Together, these studies share a goal: making AI systems more interpretable, using diverse methodologies across recommendation analysis, multimedia understanding, and computational ethics. They demonstrate how AI can move beyond prediction toward explanation in application domains such as auditing algorithmic bias, organizing vast video data, and clarifying the moral dimensions of toxic and hate-speech interactions.

Collectively, this work reflects COSMOS’s mission to design AI systems that are robust, scalable, and responsive to the complexities of digital ecosystems. For science, it advances interpretable machine learning and hybrid approaches that blend statistical and symbolic reasoning; for society, it promotes transparency, reduces bias, and supports more accountable digital platforms.