COSMOS at UA Little Rock continues to push the boundaries of socio-computational research, unveiling two groundbreaking studies at the prestigious and highly interdisciplinary 18th International Conference on Social Computing, Behavioral-Cultural Modeling, & Prediction (SBP-BRiMS). Hosted at Carnegie Mellon University, this elite forum brings together global leaders in computer science and social behavioral modeling to address the world’s most pressing digital threats.

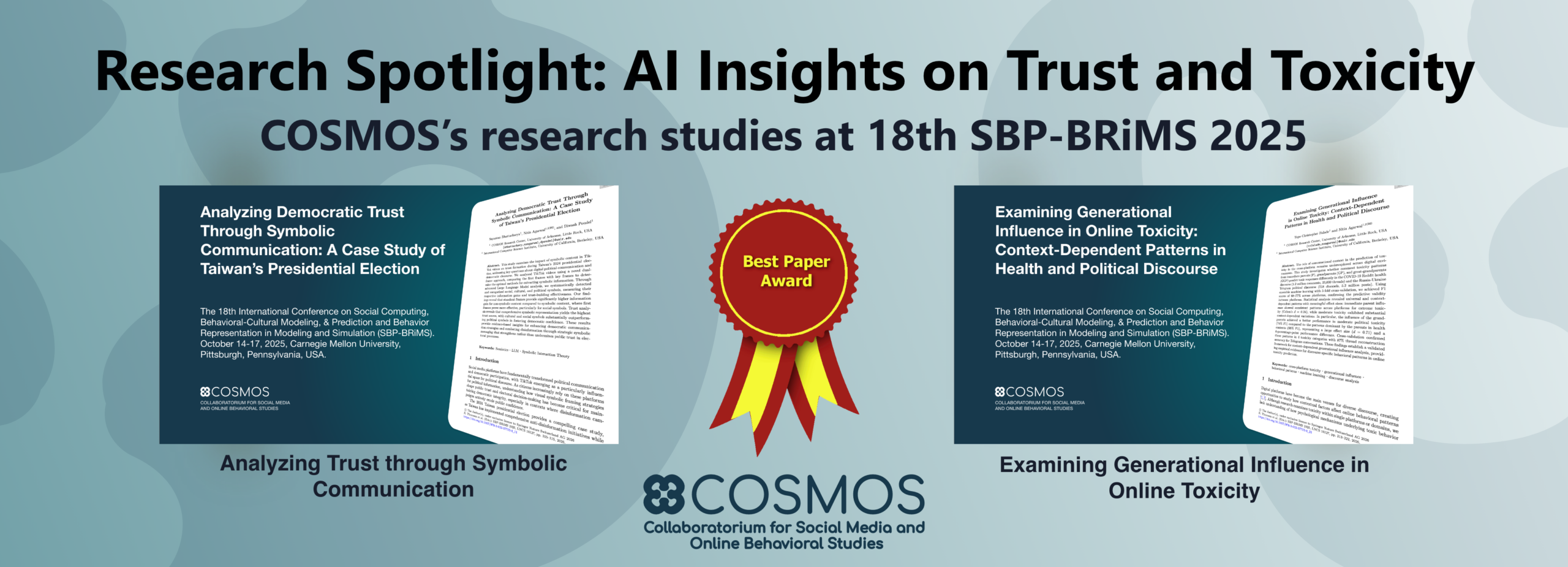

The first study, entitled “Analyzing Democratic Trust Through Symbolic Communication: A Case Study of Taiwan’s Presidential Election,” investigates how symbolic content in TikTok videos influences trust formation during the 2024 Taiwan presidential election, using a novel dual-frame method that compares each video's first frame with its standout (key) frame. The analysis shows that first frames are more effective for conveying symbolic content, especially social symbols, while standout frames better capture non-symbolic information, and that cultural and social symbols significantly outperform political symbols in building trust. Overall, the findings suggest that strategically incorporating diverse symbolic elements can enhance democratic communication and strengthen public trust in electoral processes. This study received the best paper award at the conference.

The second study, entitled “Examining Generational Influence in Online Toxicity: Context-Dependent Patterns in Health and Political Discourse,” examines how conversational context, specifically parent, grandparent, and great-grandparent comments, affects the prediction of toxicity across platforms, analyzing large-scale discussions from COVID-19 Reddit health discourse and Russia–Ukraine Telegram political discourse. Using ensemble machine learning, the authors achieved strong predictive performance (F1 scores of 68–77%) and found both universal and context-dependent patterns, with immediate parent influence consistently predicting extreme toxicity while deeper conversational layers (e.g., grandparents) better captured moderate toxicity in political contexts. Overall, the results demonstrate that incorporating generational conversational context significantly improves cross-platform toxicity prediction and reveals discourse-specific behavioral dynamics.
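To make the idea of generational context concrete, the following is a minimal Python sketch of how a comment's parent, grandparent, and great-grandparent could be collected from a reply thread and turned into features. It is an illustration only and not the authors' pipeline: the function names, the `parent_of` mapping, and the per-comment toxicity scores are all hypothetical, and the study's actual features and ensemble models are not described here.

```python
from typing import Dict, List, Optional

# Hypothetical labels for the three ancestor levels named in the study summary.
GENERATIONS = ["parent", "grandparent", "great_grandparent"]


def ancestor_chain(
    comment_id: str,
    parent_of: Dict[str, Optional[str]],
    depth: int = 3,
) -> List[str]:
    """Return up to `depth` ancestor IDs, nearest first (parent, grandparent, ...)."""
    chain: List[str] = []
    current = parent_of.get(comment_id)
    # The depth bound also guards against malformed threads that contain cycles.
    while current is not None and len(chain) < depth:
        chain.append(current)
        current = parent_of.get(current)
    return chain


def context_features(
    comment_id: str,
    parent_of: Dict[str, Optional[str]],
    toxicity: Dict[str, float],
) -> Dict[str, Optional[float]]:
    """Map each ancestor level to its (assumed, precomputed) toxicity score.

    Levels that do not exist, e.g. for a top-level comment, are set to None.
    """
    chain = ancestor_chain(comment_id, parent_of, depth=len(GENERATIONS))
    features: Dict[str, Optional[float]] = {}
    for i, name in enumerate(GENERATIONS):
        ancestor = chain[i] if i < len(chain) else None
        features[f"{name}_toxicity"] = toxicity.get(ancestor) if ancestor else None
    return features
```

In a setup like this, the resulting feature dictionary for each comment would be passed, alongside features of the comment itself, to whatever classifier is used to predict toxicity.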

Both studies leverage AI-driven social computing approaches to analyze large-scale social media data, but they differ in focus and methodological emphasis. The TikTok study applies advanced large language models to interpret symbolic visual content and its role in trust formation, highlighting how multimodal AI can decode cultural and social signals in short-form video. In contrast, the cross-platform toxicity study employs ensemble machine learning and conversational thread reconstruction to model how hierarchical context shapes toxic behavior, emphasizing structural and temporal dynamics in online discourse.

Together, these studies demonstrate the power of AI in uncovering nuanced patterns in digital communication, whether through symbolic meaning-making or conversational context modeling. Scientifically, they advance methods for integrating multimodal and contextual data into social computing frameworks; societally, they offer actionable insights for strengthening democratic discourse, improving content moderation, and designing interventions to foster trust while mitigating harmful online behavior.